Touch. Play. Know.

Imagine just using your finger and an interactive whiteboard to...

- Juxtapose images

- Move images around

- Blend images with a transparency feature

- Extract details

- Erase parts to view an underlying image or replace part of an existing image

- Flip images across horizontal or vertical axes

- Rotate images through 360 degrees

- Annotate important information or commentary right on the screen

- Scale images to any size you like

- Create new images from existing ones

Nothing like this was possible 12 years ago, and Slavko Milekic was fed up. Milekic, an MD who also holds a PhD in psychology and is currently a professor of Cognitive Science and Digital Design at

the University of the Arts in Philadelphia, says, "I was very frustrated as a computer user, a psychologist and a parent with 'inhumane' interfaces for current computer

applications, especially in the areas of education and art exploration."

He wrote a paper, Creating Child-Friendly Computer Interfaces, and it led to the beginning of a new part of his career. "I was soon approached by a museum to create an

application which would use the principles I advocated. The result was a touch-screen based application at the Speed Art Museum designed for their youngest patrons, allowing them to

explore the museum collection in a child-friendly way: by touching, moving, stretching and throwing objects presented on the screen... Although I made this application more than ten

years ago, it is still in use."

Revolution was the only choice for him. "I developed the software in Revolution (at that time it was called MetaCard) because I am not a programmer and was 'mathematically

challenged' all of my life! I never took any programming or computer science courses (as you know, I am a physician and psychologist). Revolution was the only authoring environment I

could use since it uses English-like scripting language (for example, 'move this object to that location', or 'show this, hide that'). Another big advantage of Revolution was that it

was completely cross-platform - one could use the same code to create applications that would run on any version of Windows or Mac operating systems (even Unix!). Believe it or not, I

am now using Revolution to create custom software for my research, ranging from building gesture-based interfaces to collecting data from eye- and gaze-tracking applications. I also

teach a course called Interactive Media and I am consistently impressed with final projects my students are able to produce after playing with Revolution for only one semester!"

Regarding the use of touchscreens and interactive whiteboards, Dr. Milekic firmly believes that they are the future of human-computer interface design. Just look at the iPod and iPhone:

both use a version of a touch-sensitive surface as the main interface. He explains, "Even though research findings indicate that the use of touchscreens is more intuitive and

efficient than mouse- and keyboard-based interactions, they never became

mainstream for a simple reason: lack of adequate interface. For years I have been developing touchscreen-based user interfaces that would be easy to use."

Figure 1. An example interactive surface in a science museum. It presents a giant image of a scientific instrument, readily available for visual exploration and

manipulation of moveable parts. The back side of the instrument can also be examined by 'flipping' the instrument over with a hand gesture.

The basic design of an interactive surface is very simple (see Figure 1). There is a flat panel that can be mounted on the wall and is capable of detecting touch. Currently, there are

a number of different technologies that are commercially available, and they provide touch-sensitive surfaces ranging in size from 17'' to billboard-size. They differ in the way the

image is generated (front or rear projection, plasma screens, flat panel LCD), and in the technology used for touch detection (capacitive, resistive, Surface Acoustic Wave, optical).

However, the common element is that the image is invariably computer-generated and the interactions with the surface are software driven. This opens up the possibility for the

development of novel and innovative uses of these hardware elements, which are traditionally used for dull but efficient business communication. Here are some suggestions for the

direction of development of more pedagogically oriented interaction design.

Interaction design for an interactive surface follows two principles: a) any presented information has to be manipulable by the user, and b) whenever possible, the properties of the

data displayed should also have a tangible multi-sensory component (see the concept of tangiality via the link below). The main interactions with the content occur by active contact with an

interactive surface as a result of body, arm or hand movements.

The potential of digital technology to make abstract information not only visible (as, for example, in scientific visualizations of mathematical, astronomical or biological

information), but also manipulable makes it an extremely powerful educational tool. It is in this manner that the digital medium will be used for the proposed installation. The basic

principle is to make specific information (like the function of an object) accessible through simple exploratory gestures.

There are many possible pedagogical uses of an environment that allows an individual to interact directly and physically with visually presented information. A

couple of "digital tools" have already been developed to illustrate the educational potential of touchscreen-based manipulations on an interactive surface.

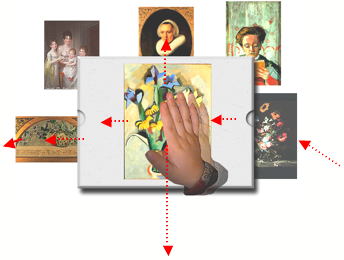

Figure 2. By making objects (in the above example, paintings) movable and 'throwable' it is possible to create a navigational space that will reflect the categories

signified by the objects. In the above illustration, the objects are the paintings in the museum gallery classified into child-friendly categories, like "faces",

"flowers", "outdoors". "Throwing" an object to the right or left lets the child explore objects belonging to one category. In the example above, throwing

an object left or right explores the category of "flowers"; throwing it up brings the category "faces" (portraits) and throwing it down brings the category

"outdoors" (not depicted). (example from KiddyFace installation at the Speed Art Museum in Louisville, KY, designed by Slavko Milekic)

An example of a pedagogical tool that uses physical manipulation of visually presented information is a database that uses object "throwing" as a novel and intuitive way of

navigation through hierarchically organized spaces. For example, throwing an object in a horizontal plane (left or right) will exchange it for an object belonging to the same category,

while throwing it in a vertical plane will bring an object belonging to a different category (see Figure 2 for an example in an art education program).
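The navigation rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the KiddyFace implementation (which was written in Revolution): the class name `ThrowBrowser`, the wrap-around at the ends of a list, and the choice that a throw to the right means "next" are all invented for the example. Categories are assumed to be non-empty.

```python
from dataclasses import dataclass


@dataclass
class ThrowBrowser:
    """Hypothetical browser: horizontal throws stay in a category, vertical throws switch it."""
    categories: dict          # category name -> list of items, in display order
    category_index: int = 0   # which category is currently shown
    item_index: int = 0       # which item within that category is shown

    def current(self):
        """Return (category name, item) for the object now on screen."""
        name = list(self.categories)[self.category_index]
        return name, self.categories[name][self.item_index]

    def throw(self, direction):
        """Apply a throw ('left', 'right', 'up' or 'down') and return the new object."""
        names = list(self.categories)
        if direction in ("left", "right"):
            # Same category, neighbouring item (wrapping around at the ends).
            items = self.categories[names[self.category_index]]
            step = 1 if direction == "right" else -1
            self.item_index = (self.item_index + step) % len(items)
        elif direction in ("up", "down"):
            # Different category; start at its first item.
            step = -1 if direction == "up" else 1
            self.category_index = (self.category_index + step) % len(names)
            self.item_index = 0
        else:
            raise ValueError(f"unknown direction: {direction!r}")
        return self.current()


# Mirrors the layout in Figure 2: "faces" above "flowers", "outdoors" below.
gallery = {
    "faces": ["portrait A", "portrait B"],
    "flowers": ["tulips", "roses", "lilies"],
    "outdoors": ["meadow"],
}
browser = ThrowBrowser(gallery, category_index=1)  # start in "flowers"
browser.throw("right")   # stays in "flowers", shows the next painting
browser.throw("up")      # switches to "faces"
```

Because the whole navigation state is two indices, no on-screen buttons are needed: the direction of the throw carries all the information.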

A variant of object throwing is throwing away the whole screen and exchanging it for a new one. Rapid movement (dragging) of the background in the desired direction will lead to

the appearance of the new screen. Using this natural way of navigation, it is possible to design an uncluttered 'buttonless' interface with more space available for the display of

relevant information.

As mentioned previously, touchscreen interactions can be replicated using an interactive whiteboard. Most whiteboards do not require a special stylus, and projected images can be

manipulated by finger or hand.

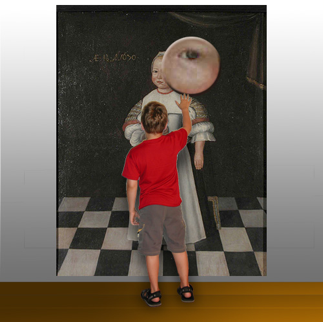

Figure 3. An interactive surface in an art museum, showing a touch-sensitive painting available for visual exploration and manipulation. Shown above is exploration

using a 'magnifying glass' tool.

Although interactive whiteboards are also advertised for use in schools, the accompanying software does not use the interactive potential of the board except to capture a simulated mouse-click.

The software used with these modules is based on the design principles of the original KiddyFace(TM) environment, which was specifically designed by the author of this proposal for

touch- and movement-based interactions.

The basic philosophy behind KiddyFace(TM) software is that, in order to comprehend certain concepts, it is necessary to build a library of experiences through interactions illustrative

of a particular concept. This approach is currently widely used for discovery-based learning. The interactions in the KiddyFace(TM) environment are based on direct manipulation of visually

represented objects. The advantage of this approach is that direct manipulation does not have to be learned: pointing to, grabbing and dropping objects are some of the earliest

forms of interaction with the world. The interactions supported in the KiddyFace(TM) environment include:

- selection (pointing to and touching an object on the screen with a finger);

- moving (dragging the selected object with a finger or hand across the screen);

- dropping (lifting the finger off the screen when the object has been brought to a desired location).

More complex interactions, available in specific contexts, include:

- object modification: double-tapping on an object puts it into 'modifiable mode', indicated by a red outline. Depending on the context, the modification of objects can also

take different forms - it can be the change in relative size of an object, its transparency, rotation angle, etc. When in this mode, an object may be used in a simulation of its real

use, for example, nocturnal can be used to calculate

- object throwing: an object can be literally thrown off the screen if it is rapidly moved in one direction and suddenly released. Moving an object at normal speed will not elicit

throwing. To throw an object it is necessary to achieve a certain speed and 'momentum'.
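One way to tell a throw from an ordinary drop is to look at how fast the finger was moving just before it left the screen. The sketch below is an assumption-laden illustration rather than the method used in KiddyFace: the function names, the 0.1-second sampling window, and the threshold of 1500 pixels per second are all hypothetical, and the y axis is assumed to grow downward, as is usual for screen coordinates.

```python
import math

THROW_SPEED = 1500.0  # pixels per second; hypothetical threshold, tune per device


def release_velocity(samples, window=0.1):
    """Estimate (vx, vy) at release from (time, x, y) touch samples, oldest first.

    Only the last `window` seconds of movement are considered, so a slow drag
    that ends in a quick flick still counts as a throw.
    """
    if len(samples) < 2:
        return 0.0, 0.0
    t_end, x_end, y_end = samples[-1]
    # Earliest sample that still falls inside the window (the last one always does).
    t0, x0, y0 = next(s for s in samples if t_end - s[0] <= window)
    dt = t_end - t0
    if dt <= 0:
        return 0.0, 0.0
    return (x_end - x0) / dt, (y_end - y0) / dt


def classify_release(samples, threshold=THROW_SPEED):
    """Return 'drop' or 'throw-left' / 'throw-right' / 'throw-up' / 'throw-down'."""
    vx, vy = release_velocity(samples)
    if math.hypot(vx, vy) < threshold:
        return "drop"  # moved at normal speed: leave the object where it was released
    if abs(vx) >= abs(vy):
        return "throw-right" if vx > 0 else "throw-left"
    return "throw-down" if vy > 0 else "throw-up"


# A gentle drag (about 100 px/s) versus a quick flick to the right (about 3500 px/s).
slow_drag = [(0.00, 0, 0), (0.05, 5, 0), (0.10, 10, 0)]
fast_flick = [(0.00, 0, 0), (0.02, 60, 2), (0.04, 140, 4)]
print(classify_release(slow_drag))
print(classify_release(fast_flick))
```

Reducing the gesture to its dominant axis is a deliberate simplification: it maps directly onto the four navigation directions, at the cost of ignoring diagonal throws.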

Although a simple idea, object throwing transcends the traditional notions of human-computer interactions. Being a natural action, it offers a wide range of contextually obvious

outcomes (for example, moving something out of the way, throwing it away, exchanging an object for another one, etc.) that don't have to be specifically taught.

After Dr. Milekic had spent 12 years developing his software, Jennifer McAleese saw his work. "I was wowed by the software... it was simple not only to understand, but it was easy to use." After

16 years on Wall Street, she was interested in products that would not only make money but would help people, especially children. "I asked him, which aspect of your technology do

you think we could commercialize? I saw his face light up when he knew the exact answer: a presentation tool that allows direct manipulation of visual content. He said that this

product was not on the market yet. Given his answer and his passion, I knew this was the beginning of a business relationship."

Together they formed FlatWorld Interactives, LLC. McAleese says, "The basis of our common philosophy is that we strongly believe in equal access to education, interactive

learning with new technologies and the development of products which are good for kids."

Dr. Milekic's innovative, intuitive, user-friendly applications have been implemented in museum settings: The Clay Center in West Virginia, The Speed Museum in Kentucky and The

Phoenix Art Museum in Arizona. He is featured in the PBS series Art to Heart which highlights the importance of children's interactive and experiential learning using his child-friendly,

digital application called KiddyFace at The Speed Museum in Kentucky. His work has been included in Berlin's Science & Arts Research Labs Exhibit (SARLE '04) and supported by the Museums

and the Web International Conference in 2006 where he presented his interactive gesture-based browser (available through www.MIOculture.com). In 2005 Dr. Milekic was granted a US patent

for an original way of interacting with information on a visual display.

FlatWorld Interactives (FWI) focuses on two goals: creating and distributing the presentation software (called ShowMe Tools), and taking on consulting projects to create custom solutions for their clients. All of

their software is cross-platform, and it really springs to life with an interactive whiteboard.

ShowMe Tools can be best described as software tools that allow real-time manipulation of visual content. This brings incredible flexibility to the presenter, who can actively direct

the course of demonstration and respond to audience feedback and questions.

Art education was the original focus. However, as the software has been refined, the potential market has expanded into all areas of education and business. It's simple, intuitive

and affordable. It's so easy to use that there's no manual, and it's a lively alternative to the standard passive slide show.

Their consulting service goes beyond the first version of ShowMe Tools to include the following tools, which are not yet commercially available as downloadable products. Each tool

is used with the client's content.

- Their signature tool is the Talking Magnifying Glass, highlighting specifics of an image while describing the details.

- Puzzle Formation allows an image to fall apart and be magnetically reconfigured.

- 3D Object Rotation enables the audience to view all sides of an object as it rotates.

- Palette Formation recreates the colors and painting process of an image.

- The Memory Game.

- Keyhole Exploration explores an image while focusing on various details within the image.

- Guided Exploration: with a spotlight, use and record a specific exploration path.

McAleese notes, "It's interesting that he codes in a simple to use authoring environment to design simple, intuitive, user-friendly applications."

Dr. Milekic is putting the finishing touches on ShowMe Tools before it's available for download. If you'd like to be notified of the product launch and other FWI news, visit them at

http://www.flatworldinteractives.com.

Slavko Milekic is an artist, cognitive scientist and Revolution programmer. His research focuses on alternative ways of interacting with digital content including touch, voice,

gestures and eye- and gaze-tracking. He is a professor of Cognitive Science & Digital Design at the University of the Arts in Philadelphia and Creative Director for FlatWorld

Interactives. (smilekic@uarts.edu)

Jennifer McAleese is the co-founder and Managing Director of FlatWorld Interactives (FWI). She is also the Director of Kids knowMore, a nonprofit organization affiliated with FWI to

provide equal access to education for children in inner cities through the donation of interactive whiteboards and FWI's interactive educational software applications. Beginning in 1985,

she had an extensive Wall Street career in New York and Philadelphia, most recently as a Vice President in global equities for Citigroup. As an Institutional Equity Research Salesperson

she was responsible for the delivery of investment advice and research analysis of global equities to U.S. institutional investors. Within Citigroup's Institutional Equity Sales

division, she recently designed and coordinated the professional development mentoring program for junior associates.

Links:

FlatWorld Interactives, LLC: http://www.flatworldinteractives.com (under construction)

Slavko Milekic, MD, PhD: www.uarts.edu/faculty/smilekic

Milekic, S. (2002) Towards Tangible Virtualities: Tangialities, in Bearman, D., Trant, J. (eds.) Museums and the Web 2002: Selected Papers from an International Conference, Archives &

Museum Informatics, Pittsburgh http://www.archimuse.com/mw2002/papers/milekic/milekic.html

PBS series "Art to Heart" DVD: www.ket.org/arttoheart

Video clips of children at the Philadelphia School using Dr. Milekic's software:

www.flatworldinteractives.com/movies/interactive_whiteboard_web.wmv

Dr. Milekic's Axes Station technology:

Available through www.MIOculture.com
